Points of Failure in a Distributed System

Overview

We will look at the different points at which an application can fail in a distributed system and how to address those failures. We will deliberately fail the application at these points to see what the error looks like and how to handle it. To build a good distributed system, you need to understand where it can fail.

GitHub: https://github.com/gitorko/project57

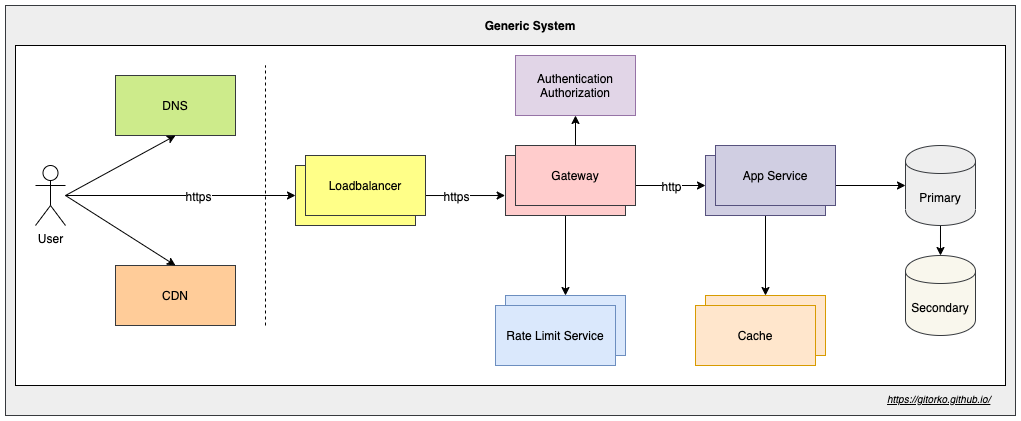

Generic System

HTTP Connections

A misbehaving downstream client is opening TCP connections that never send any data, and valid users are getting denial of service. What do you do?

Your server is receiving a lot of bad TCP connections. To test this, set the thread count to 1 and the connection timeout to 10 ms. Now use telnet to connect to the running port. It will connect, but since no data is sent, the TCP connection is closed after 10 ms. Then set a bigger timeout and try to hit the REST API while telnet is blocking the single connection: your REST API call will wait until the TCP connection is free.

    server.tomcat.threads.max=1
    server.tomcat.connection-timeout=10

    telnet localhost 31000

The connection timeout means: if the client sends no data for the configured duration after the TCP handshake completes, close the connection. A bare number is read as milliseconds, so 5000 means 5 seconds.

    server.tomcat.connection-timeout=5000

Many assume that this connection timeout also closes the connection when a long-running task takes longer than the configured duration. This is not true. It only closes the connection if the client doesn't send anything for that long.
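To make the idle-connection behaviour concrete, here is a minimal JDK-only sketch of the same idea. It is not how Tomcat implements its timeout: it assumes a plain ServerSocket, and the class and method names (IdleConnectionDemo, dropsSilentClient, demo) are made up for illustration. A client connects and sends nothing, like the telnet test, and the server gives up once the read timeout expires.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.net.SocketTimeoutException;

class IdleConnectionDemo {

    /**
     * Accepts one connection and waits up to timeoutMillis for the first byte.
     * Returns true if the client stayed silent and the server gave up on it.
     */
    static boolean dropsSilentClient(ServerSocket server, int timeoutMillis) {
        try (Socket connection = server.accept()) {
            connection.setSoTimeout(timeoutMillis); // read() may block at most this long
            connection.getInputStream().read();
            return false; // the client sent data or closed the connection in time
        } catch (SocketTimeoutException e) {
            return true; // no data arrived; try-with-resources already closed the socket
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Opens a client that connects but never writes, like the telnet test. */
    static boolean demo(int timeoutMillis) {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        try (ServerSocket server = new ServerSocket(0, 1, loopback);
             Socket silentClient = new Socket(loopback, server.getLocalPort())) {
            return dropsSilentClient(server, timeoutMillis);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("silent client dropped: " + demo(10));
    }
}
```

Note that the timeout only measures silence on the socket. Nothing in this sketch limits how long the server takes to produce a response once the request has arrived.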

TimeLimiter

A new team member has updated a function and introduced a bug, and the function is now very slow or never returns a response. What do you do?

If a function takes too long to complete, it will block the Tomcat thread, which will further degrade system performance. Use Resilience4j to explicitly time out long-running jobs; this way runaway functions can't impact your entire system.

    @TimeLimiter(name = "service1-tl")

Spring also provides spring.mvc.async.request-timeout, which you can explore to accomplish the same.

Always assume the functions within your service will take forever and may never complete, and design accordingly.
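The idea behind a time limiter can be sketched with the JDK alone. This is not the Resilience4j implementation, only an illustration using CompletableFuture.orTimeout (Java 9+); the names TimeLimitDemo, callWithTimeout and slowJob are hypothetical.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CompletionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;
import java.util.function.Supplier;

class TimeLimitDemo {

    /** Runs the job asynchronously and stops waiting for it after timeoutMillis. */
    static String callWithTimeout(Supplier<String> job, long timeoutMillis) {
        try {
            return CompletableFuture.supplyAsync(job)
                    .orTimeout(timeoutMillis, TimeUnit.MILLISECONDS)
                    .join();
        } catch (CompletionException e) {
            if (e.getCause() instanceof TimeoutException) {
                // The caller is released; the job itself may still be running.
                return "timed out";
            }
            throw e;
        }
    }

    /** Hypothetical runaway function: sleeps before answering. */
    static String slowJob(long sleepMillis) {
        try {
            Thread.sleep(sleepMillis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "done";
    }

    public static void main(String[] args) {
        System.out.println(callWithTimeout(() -> slowJob(10), 500));    // fast enough
        System.out.println(callWithTimeout(() -> slowJob(2_000), 100)); // exceeds the limit
    }
}
```

One design point worth keeping in mind: orTimeout only frees the waiting caller. It does not interrupt the underlying work, so a truly runaway job still occupies a worker thread until it finishes.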

Request Thread Pool & Connections

Users are reporting slow connections or timeouts when connecting to your server. How many concurrent requests can your server handle?

There are 2 types of protocol a Tomcat server can be configured for:

- BIO - Blocking IO. With BIO, a thread is not free until the response is sent back. (The BIO connector was removed in Tomcat 8.5, so current embedded Tomcat versions use NIO.)

- NIO - Non-Blocking IO. With NIO, threads are free to serve other requests while the incoming request is waiting for IO to complete.

The number of threads determines how many threads can handle incoming requests. With the default of 200, up to 200 threads are ready to serve requests.

    # Applies for NIO & BIO
    server.tomcat.threads.max=200

max-connections is the maximum number of connections the server can accept and process. For BIO (Blocking IO) Tomcat, server.tomcat.threads.max = server.tomcat.max-connections: you can't have more connections than threads.

For NIO Tomcat, the number of threads can be lower than max-connections. Since the threads are not blocked while waiting for IO to complete, the server can keep more connections open and serve other requests.

    # Applies only for NIO
    server.tomcat.max-connections=500

The number of Tomcat threads and the server hardware determine how many requests can be served in a given time interval. If you have 200 threads (BIO) and each request-response takes 1 second on average, then your server can handle 200 requests per second.
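The arithmetic above can be written down as a tiny helper. This is a back-of-the-envelope sketch that assumes every request holds one thread for its whole duration; the class and method names are hypothetical.

```java
class ThroughputEstimate {

    /** Upper bound on requests per second when each request occupies a thread until it completes. */
    static double maxRequestsPerSecond(int threads, double avgResponseSeconds) {
        if (threads <= 0 || avgResponseSeconds <= 0) {
            throw new IllegalArgumentException("threads and avgResponseSeconds must be positive");
        }
        return threads / avgResponseSeconds;
    }

    public static void main(String[] args) {
        System.out.println(maxRequestsPerSecond(200, 1.0));  // 200.0
        System.out.println(maxRequestsPerSecond(200, 0.25)); // 800.0
        System.out.println(maxRequestsPerSecond(200, 2.0));  // 100.0
    }
}
```

The same thread pool serves half as many requests per second when the average response time doubles, which is why slow functions and slow downstream calls hurt throughput so quickly.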

Keep-Alive

A network admin calls to tell you that many TCP connections are being created for the same clients. What do you do?

TCP connections take time to establish. Keep-alive keeps the connection open for a while in case the client wants to send more data in the near future.

- max-keep-alive-requests - Max number of HTTP requests that can be pipelined before the connection is closed.

- keep-alive-timeout - Keeps the TCP connection open for some time to avoid another handshake if the same client sends another request.

    server.tomcat.max-keep-alive-requests=100
    server.tomcat.keep-alive-timeout=10

Rest Client Connection Timeout

You are invoking REST calls to an external service that has degraded and become very slow, thereby causing your service to slow down. What do you do?

If the server makes external calls, make sure to set the read and connection timeouts on the RestTemplate. If you don't set them, your server, which acts as a client here, will wait forever for the response.

    // If unable to connect to the external server, give up after 5 seconds.
    clientHttpRequestFactory.setConnectTimeout(5_000);

    // If unable to read data from the external API call, give up after 5 seconds.
    clientHttpRequestFactory.setReadTimeout(5_000);

Always assume that all external API calls never return and design accordingly.
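The same two timeouts exist on the JDK's own HTTP client, which makes for a self-contained way to see them in action. The sketch below is not the RestTemplate setup used in this project: it assumes java.net.http.HttpClient (Java 11+), and the names ClientTimeoutDemo, fetch and callSilentServer are hypothetical. The "external service" is simulated by a local socket that accepts the TCP handshake but never answers.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.net.http.HttpTimeoutException;
import java.time.Duration;

class ClientTimeoutDemo {

    /** Performs a GET with a connect timeout and a response timeout. */
    static String fetch(URI uri, Duration connectTimeout, Duration responseTimeout) {
        HttpClient client = HttpClient.newBuilder()
                .connectTimeout(connectTimeout)   // give up if the connection cannot be established
                .build();
        HttpRequest request = HttpRequest.newBuilder(uri)
                .timeout(responseTimeout)         // give up if no response arrives in time
                .GET()
                .build();
        try {
            return client.send(request, HttpResponse.BodyHandlers.ofString()).body();
        } catch (HttpTimeoutException e) {
            return "timed out";
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    /** Calls a local socket that completes the TCP handshake but never sends a response. */
    static String callSilentServer() {
        try (ServerSocket silent = new ServerSocket(0, 1,
                InetAddress.getByAddress(new byte[] {127, 0, 0, 1}))) {
            URI uri = URI.create("http://127.0.0.1:" + silent.getLocalPort() + "/");
            return fetch(uri, Duration.ofSeconds(1), Duration.ofMillis(300));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(callSilentServer());
    }
}
```

Without the timeout on the request, the call against the silent server would block the calling thread indefinitely, which is exactly the failure mode described above.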

Database Connection Pool

Users are reporting slowness in APIs that fetch relatively small amounts of data. What do you do?

Spring Boot uses the Hikari connection pool by default. If runaway code holds on to SQL connections, the service can quickly run out of connections in the pool and slow down the entire system.

To test this, we restrict the pool size to 1 to make the error simulation easy.

    spring.datasource.hikari.maximumPoolSize=1

By setting the connectionTimeout, we ensure that when the connection pool is full we time out after 1 second instead of waiting forever to get a new connection.

    spring.datasource.hikari.connectionTimeout=1000

Now when you trigger the API, you will see an error: only the first query succeeds and the rest fail. Failing fast is always preferable to slowing down the entire service.

    Caused by: java.sql.SQLTransientConnectionException: HikariPool-1 - Connection is not available, request timed out after 253ms.

Always assume that you will run out of database connections due to a runaway method or a storm of requests, and design accordingly.
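The fail-fast behaviour of a bounded pool can be illustrated without a database. The sketch below is not how Hikari is implemented: it models the pool as a Semaphore with a timed acquire, and the names FailFastPool, tryBorrow and giveBack are hypothetical.

```java
import java.util.concurrent.Semaphore;
import java.util.concurrent.TimeUnit;

class FailFastPool {

    private final Semaphore permits;

    FailFastPool(int maxPoolSize) {
        this.permits = new Semaphore(maxPoolSize, true);
    }

    /** Returns true if a connection slot was obtained within the timeout, false otherwise. */
    boolean tryBorrow(long timeoutMillis) {
        try {
            return permits.tryAcquire(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
    }

    /** Returns a previously borrowed slot to the pool. */
    void giveBack() {
        permits.release();
    }

    public static void main(String[] args) {
        FailFastPool pool = new FailFastPool(1);   // same size as the test setup above
        System.out.println(pool.tryBorrow(100));   // true: the only slot is free
        System.out.println(pool.tryBorrow(100));   // false: pool exhausted, caller fails fast
        pool.giveBack();
        System.out.println(pool.tryBorrow(100));   // true: the slot was returned
    }
}
```

The second caller gets a quick, explicit failure after 100 ms instead of hanging, which is the same trade-off the connectionTimeout setting makes.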

Slow Query

Users are reporting slowness in a DB fetch API that fetches data from multiple tables via a join. Your DBA also confirms that the query is too slow. What do you do?

Slow queries often slow down the entire system. To test this, we explicitly slow down a query with the pg_sleep function.

We set a timeout on the transaction to ensure that a slow query doesn't impact the entire system: if the query doesn't return a result within 5 seconds, an exception is thrown.

    @Transactional(timeout = 5)

Always assume that all DB calls never return or are very slow and design accordingly.

You can further look at optimizing the query with the help of indexes. Here, however, we design the backend so that the service doesn't fail as a whole due to slow queries.

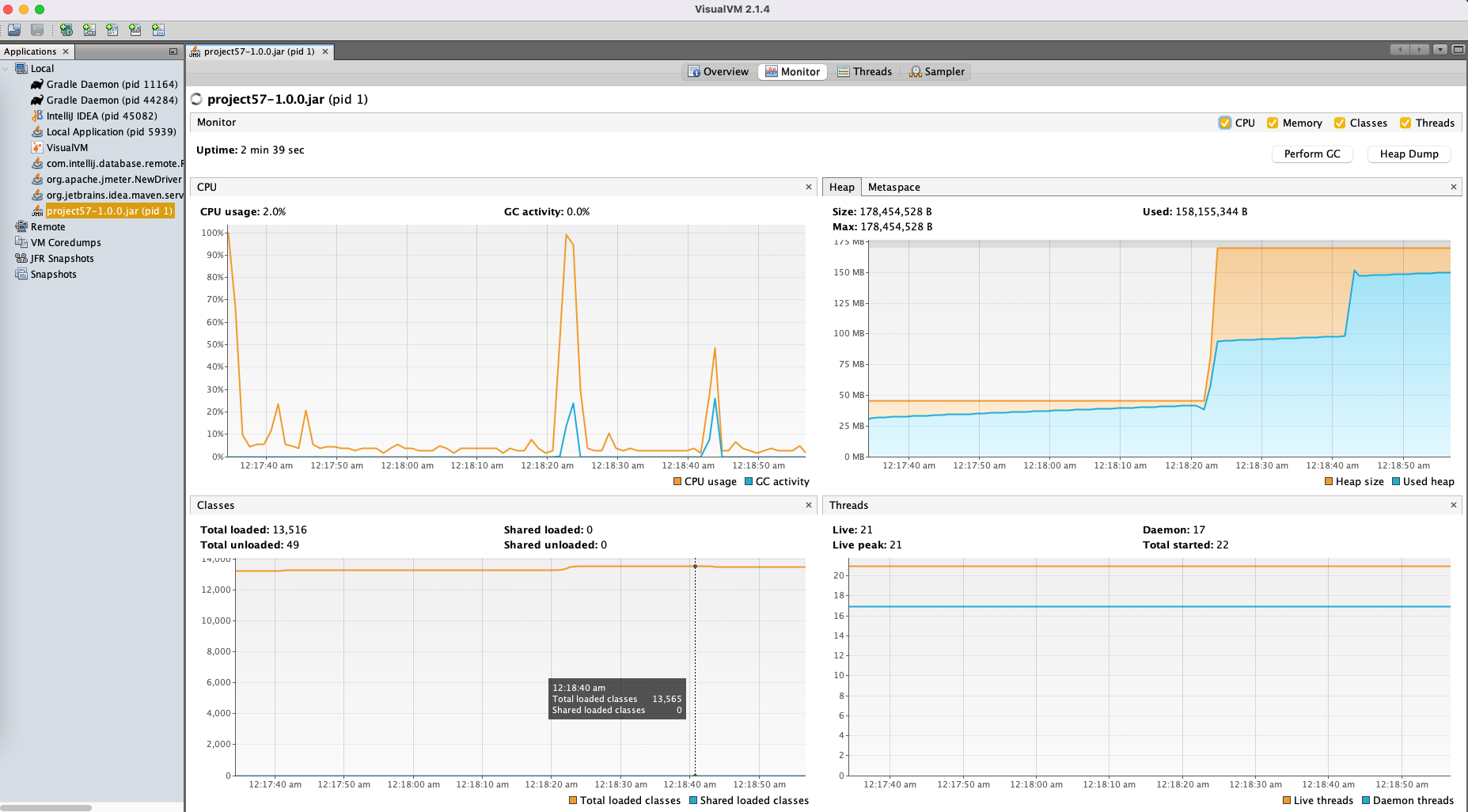

Memory Leak & CPU Spike

You have developed your service on your laptop and tested it with a big heap memory setting. However, your Kubernetes admin calls to inform you that Kubernetes is a shared resource and you can't consume so much memory. What do you do?

Memory leaks are always hard to debug. A badly written method can cause a spike in memory usage, leaving other services to struggle for heap memory and in turn causing a lot of GC (garbage collection) cycles, which are stop-the-world events.

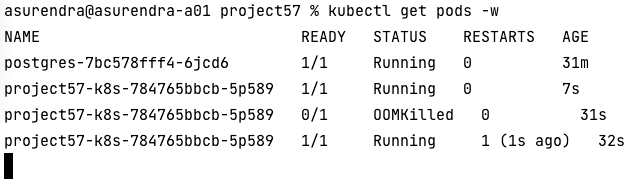

With Kubernetes you can define resource limits. A container that tries to use more memory than its limit is killed, while CPU usage above the limit is throttled.

    resources:
      requests:
        cpu: "250m"
        memory: "250Mi"
      limits:
        cpu: "2"
        memory: "380Mi"

Now when you invoke the API that causes a memory spike, the pod will be killed (OOMKilled) and a new one brought up.

An OutOfMemoryError inside the pod doesn't necessarily kill the pod unless some health check is configured. The pod will remain in the Running state despite the OOM error. Only the defined resource limits determine when the pod gets killed.

    Exception in thread "http-nio-31000-exec-1" java.lang.OutOfMemoryError: Java heap space

Response Payload Size

Your REST API returns a list of customer records. However, as more customers are added in production, the response keeps getting bigger and slows down request-response times.

Always add pagination support and avoid returning all the data in a single response. Data may grow later, causing the response size to increase over time.
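As a minimal sketch of what pagination means for the response, the helper below slices an in-memory list into zero-based pages. It is only an illustration with hypothetical names (Pagination, page); in a real service the paging would normally be pushed down to the database query so that only one page of rows is loaded.

```java
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

class Pagination {

    /** Returns the zero-based page of the given size, or an empty list past the end. */
    static <T> List<T> page(List<T> items, int pageNumber, int pageSize) {
        if (pageNumber < 0 || pageSize <= 0) {
            throw new IllegalArgumentException("pageNumber must be >= 0 and pageSize > 0");
        }
        long from = (long) pageNumber * pageSize;
        if (from >= items.size()) {
            return List.of();
        }
        int to = (int) Math.min(from + pageSize, items.size());
        return List.copyOf(items.subList((int) from, to));
    }

    public static void main(String[] args) {
        // 99 customers, like the seed data in this project
        List<String> customers = IntStream.range(0, 99)
                .mapToObj(i -> "customer_" + i)
                .collect(Collectors.toList());

        System.out.println(page(customers, 0, 10));         // customer_0 .. customer_9
        System.out.println(page(customers, 9, 10).size());  // 9 (last, partial page)
        System.out.println(page(customers, 10, 10).size()); // 0 (past the end)
    }
}
```

With a fixed page size, the response stays bounded no matter how many customers are added later.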

Enable gzip compression, which also reduces the size of the response payload.

    server.compression.enabled=true

    # Minimum response size (in bytes) at which compression kicks in
    server.compression.min-response-size=512

    # Mime types that should be compressed
    server.compression.mime-types=text/xml, text/plain, application/json
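To get a feel for how much gzip can save on a repetitive JSON payload, here is a small JDK-only sketch. It does not go through Tomcat's compression filter; it simply gzips a made-up customer list in memory, and the names GzipDemo, gzip, gunzip and sampleJson are hypothetical.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.zip.GZIPInputStream;
import java.util.zip.GZIPOutputStream;

class GzipDemo {

    /** Compresses the text as UTF-8 bytes with gzip. */
    static byte[] gzip(String text) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (GZIPOutputStream out = new GZIPOutputStream(buffer)) {
            out.write(text.getBytes(StandardCharsets.UTF_8));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray(); // complete only after the gzip stream is closed
    }

    /** Reverses gzip(String). */
    static String gunzip(byte[] compressed) {
        try (GZIPInputStream in = new GZIPInputStream(new ByteArrayInputStream(compressed))) {
            return new String(in.readAllBytes(), StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Builds an illustrative JSON array of customer records. */
    static String sampleJson(int records) {
        StringBuilder json = new StringBuilder("[");
        for (int i = 0; i < records; i++) {
            if (i > 0) {
                json.append(',');
            }
            json.append("{\"name\":\"customer_").append(i)
                .append("\",\"phone\":\"phone_").append(i).append("\"}");
        }
        return json.append(']').toString();
    }

    public static void main(String[] args) {
        String json = sampleJson(99);
        byte[] compressed = gzip(json);
        System.out.println("original bytes:   " + json.getBytes(StandardCharsets.UTF_8).length);
        System.out.println("compressed bytes: " + compressed.length);
        System.out.println("round trip ok:    " + gunzip(compressed).equals(json));
    }
}
```

Very small responses gain little from compression and still pay the CPU cost, which is why a minimum response size is configured above.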

You can also change the protocol to HTTP/2 to get more benefits, like multiplexing many requests over a single TCP connection.

    server.http2.enabled=true

Always try to reduce the size of the response payload. Use pagination for data records and gzip the payload to reduce its size.

API versioning

A new team member has updated an existing API that is used by many downstream applications to introduce a new feature. However, a bug got introduced and now all the downstream APIs are failing.

Always version your API instead of updating existing APIs that are used by downstream services. This contains the blast radius of any bug.

e.g. /api/v1/customers is the old API and /api/v2/customers is the new API.

Backward compatibility is very important, especially when services roll back to older versions in distributed systems. Always work with a versioned API if major changes or new features are being introduced.
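A minimal sketch of the idea, without any web framework: the old and new versions live side by side under different paths, so a bug in v2 cannot break callers of v1. The response shapes and the names VersionedApi, customersV1, customersV2 and handle are assumptions made up for this illustration.

```java
import java.util.Map;
import java.util.function.Supplier;

class VersionedApi {

    /** Old contract: a plain JSON array. Left untouched for existing callers. */
    static String customersV1() {
        return "[\"customer_0\",\"customer_1\"]";
    }

    /** New contract: a paged envelope. A bug here only affects callers that opted in to v2. */
    static String customersV2() {
        return "{\"page\":0,\"items\":[\"customer_0\",\"customer_1\"]}";
    }

    private static final Map<String, Supplier<String>> ROUTES = Map.of(
            "/api/v1/customers", VersionedApi::customersV1,
            "/api/v2/customers", VersionedApi::customersV2);

    /** Dispatches a path to the handler for that API version. */
    static String handle(String path) {
        Supplier<String> handler = ROUTES.get(path);
        return handler == null ? "404 Not Found" : handler.get();
    }

    public static void main(String[] args) {
        System.out.println(handle("/api/v1/customers"));
        System.out.println(handle("/api/v2/customers"));
        System.out.println(handle("/api/v3/customers"));
    }
}
```

Downstream services keep calling v1 until they are ready to migrate, and a rollback of the service only needs to remove the v2 route.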

Other Failures

Once you expand the distributed system, there can be various other points of failure:

- Primary DB failure

- Secondary DB replication failure

- Queue failures

- Network failures

- External System can go down

- Service nodes can go down

- Cache invalidation/eviction failure

- Load Balancer failures

- Datacenter failure for one region

Code

    package com.demo.project57;

    import com.demo.project57.domain.Customer;
    import com.demo.project57.repository.CustomerRepository;
    import org.springframework.boot.CommandLineRunner;
    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.context.annotation.Bean;
    import org.springframework.http.client.SimpleClientHttpRequestFactory;
    import org.springframework.web.client.RestTemplate;

    @SpringBootApplication
    public class Main {
        public static void main(String[] args) {
            SpringApplication.run(Main.class, args);
        }

        @Bean
        public RestTemplate getRestTemplate() {
            return new RestTemplate(getClientHttpRequestFactory());
        }

        // Override timeouts in request factory
        private SimpleClientHttpRequestFactory getClientHttpRequestFactory() {
            SimpleClientHttpRequestFactory clientHttpRequestFactory
                    = new SimpleClientHttpRequestFactory();
            // Connect timeout
            clientHttpRequestFactory.setConnectTimeout(5_000);

            // Read timeout
            clientHttpRequestFactory.setReadTimeout(5_000);
            return clientHttpRequestFactory;
        }

        @Bean
        public CommandLineRunner seedData(CustomerRepository customerRepository) {
            return args -> {
                for (int i = 0; i < 99; i++) {
                    customerRepository.save(Customer.builder().name("customer_" + i).phone("phone_" + i).build());
                }
            };
        }
    }

    package com.demo.project57.controller;

    import java.time.LocalDateTime;
    import java.util.ArrayList;
    import java.util.List;
    import java.util.Random;
    import java.util.concurrent.CompletableFuture;
    import java.util.concurrent.TimeUnit;

    import com.demo.project57.service.CustomerService;
    import io.github.resilience4j.timelimiter.annotation.TimeLimiter;
    import lombok.RequiredArgsConstructor;
    import lombok.SneakyThrows;
    import lombok.extern.slf4j.Slf4j;
    import org.springframework.http.HttpMethod;
    import org.springframework.http.ResponseEntity;
    import org.springframework.web.bind.annotation.GetMapping;
    import org.springframework.web.bind.annotation.PathVariable;
    import org.springframework.web.bind.annotation.RestController;
    import org.springframework.web.client.RestTemplate;

    @RestController
    @RequiredArgsConstructor
    @Slf4j
    public class HomeController {

        private final RestTemplate restTemplate;
        private final CustomerService customerService;

        List<String> names = new ArrayList<>();

        @GetMapping("/api/time")
        public String getServerTime() {
            log.info("Getting server time!");
            String podName = System.getenv("HOSTNAME");
            return "Pod: " + podName + " : " + LocalDateTime.now();
        }

        /**
         * Will block the tomcat threads and hence no other requests can be processed
         */
        @GetMapping("/api/echo1/{name}")
        public String echo1(@PathVariable String name) {
            log.info("echo1 received echo request: {}", name);
            longRunningJob(true);
            return "Hello " + name;
        }

        /**
         * Will time out after 1 second so other requests can be processed.
         */
        @GetMapping("/api/echo2/{name}")
        @TimeLimiter(name = "service1-tl")
        public CompletableFuture<String> echo2(@PathVariable String name) {
            return CompletableFuture.supplyAsync(() -> {
                log.info("echo2 received echo request: {}", name);
                longRunningJob(false);
                return "Hello " + name;
            });
        }

        /**
         * API calling an external API that is not responding.
         * Since we don't have an external API, we call this service's own echo2 API.
         *
         * Here the timeout on the rest template is configured.
         */
        @GetMapping("/api/echo3/{name}")
        public String echo3(@PathVariable String name) {
            log.info("echo3 received echo request: {}", name);
            String response = restTemplate.exchange("http://localhost:31000/api/echo2/john", HttpMethod.GET, null, String.class)
                    .getBody();
            log.info("Got response: {}", response);
            return response;
        }

        /**
         * Overuse of DB connections by a runaway method
         */
        @GetMapping("/api/many-db-call")
        public int manyDbCall() {
            log.info("manyDbCall invoked!");
            return customerService.invokeAyncDbCall();
        }

        /**
         * Slow query without timeout
         * Explicit delay of 10 seconds introduced in DB query
         */
        @GetMapping("/api/count1")
        public int getCount1() {
            log.info("dbCall invoked!");
            return customerService.getCustomerCount1();
        }

        /**
         * Slow query with timeout
         * Explicit delay of 10 seconds introduced in DB query
         */
        @GetMapping("/api/count2")
        public int getCount2() {
            log.info("dbCall invoked!");
            return customerService.getCustomerCount2();
        }

        /**
         * Create spike in memory
         * List keeps growing on each call and eventually causes OOM error
         */
        @GetMapping("/api/memory-leak")
        public ResponseEntity<?> memoryLeak() {
            log.info("Inserting customers to memory");
            for (int i = 0; i < 999999; i++) {
                names.add("customer_" + i);
            }
            return ResponseEntity.ok("DONE");
        }

        @SneakyThrows
        private void longRunningJob(boolean fixedDelay) {
            if (fixedDelay) {
                TimeUnit.MINUTES.sleep(1);
            } else {
                // Randomly delay the job
                Random rd = new Random();
                if (rd.nextBoolean()) {
                    TimeUnit.MINUTES.sleep(1);
                }
            }
        }
    }

    package com.demo.project57.service;

    import com.demo.project57.repository.CustomerRepository;
    import lombok.RequiredArgsConstructor;
    import lombok.extern.slf4j.Slf4j;
    import org.springframework.scheduling.annotation.EnableAsync;
    import org.springframework.stereotype.Service;
    import org.springframework.transaction.annotation.Transactional;

    @Service
    @RequiredArgsConstructor
    @Slf4j
    @EnableAsync
    public class CustomerService {
        private final CustomerRepository customerRepository;
        private final CustomerAsyncService customerAsyncService;

        public int getCustomerCount1() {
            return customerRepository.getCustomerCount();
        }

        @Transactional(timeout = 5)
        public int getCustomerCount2() {
            return customerRepository.getCustomerCount();
        }

        public int invokeAyncDbCall() {
            for (int i = 0; i < 5; i++) {
                // Query the DB 5 times
                customerAsyncService.getCustomerCount();
            }
            // Return value doesn't matter, we are invoking parallel requests to ensure the connection pool is full.
            return 0;
        }

    }

    package com.demo.project57.service;

    import com.demo.project57.repository.CustomerRepository;
    import lombok.RequiredArgsConstructor;
    import lombok.extern.slf4j.Slf4j;
    import org.springframework.scheduling.annotation.Async;
    import org.springframework.scheduling.annotation.EnableAsync;
    import org.springframework.stereotype.Service;
    import org.springframework.transaction.annotation.Propagation;
    import org.springframework.transaction.annotation.Transactional;

    @Service
    @EnableAsync
    @RequiredArgsConstructor
    @Slf4j
    public class CustomerAsyncService {
        private final CustomerRepository customerRepository;

        /**
         * Each call runs in parallel, causing the connection pool to become full.
         * Explicitly creating many connections so we run out of connections
         */
        @Transactional(propagation = Propagation.REQUIRES_NEW)
        @Async
        public void getCustomerCount() {
            log.info("getCustomerLike invoked!");
            int count = customerRepository.getCustomerCount();
            log.info("getCustomerLike count: {}", count);
        }

    }

    spring:
      main:
        banner-mode: "off"
      datasource:
        driver-class-name: org.postgresql.Driver
        host: localhost
        url: jdbc:postgresql://${POSTGRES_HOST}:5432/${POSTGRES_DB}
        username: ${POSTGRES_USER}
        password: ${POSTGRES_PASSWORD}
        hikari:
          maximumPoolSize: 1
          connectionTimeout: 1000
          idleTimeout: 60
          maxLifetime: 180
      jpa:
        show-sql: false
        hibernate.ddl-auto: create-drop
        database-platform: org.hibernate.dialect.PostgreSQLDialect
        defer-datasource-initialization: true

    server:
      port: 31000
      tomcat:
        connection-timeout: 10
        threads:
          max: 2
        max-keep-alive-requests: 10
        keep-alive-timeout: 10
        max-connections: 5
      compression:
        enabled: true
        # Minimum response size (in bytes) at which compression kicks in
        min-response-size: 512
        # Mime types that should be compressed
        mime-types: text/xml, text/plain, application/json

    resilience4j.timelimiter:
      instances:
        service1-tl:
          timeoutDuration: 1s
          cancelRunningFuture: true

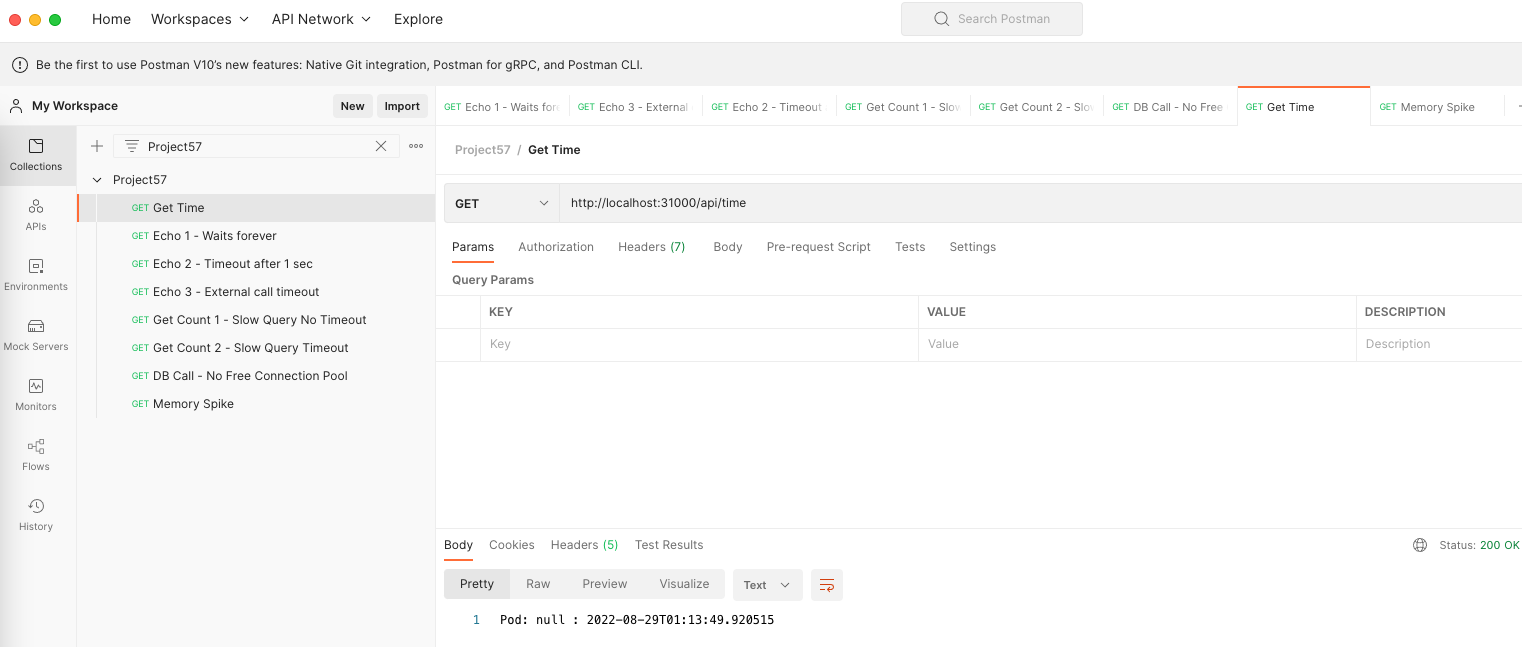

Postman

Import the Postman collection into Postman.

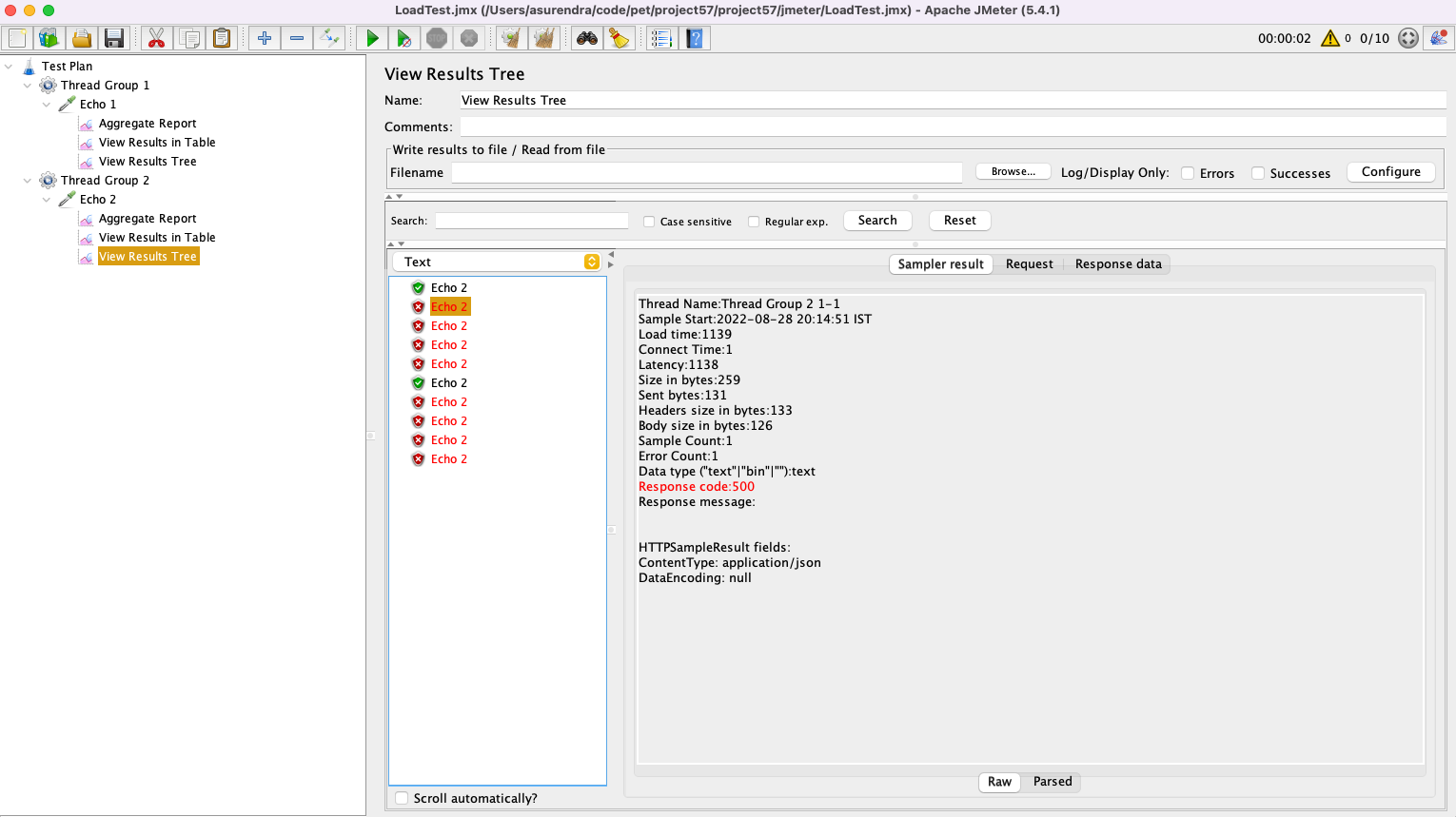

JMeter

https://raw.githubusercontent.com/gitorko/project57/main/jmeter/LoadTest.jmx

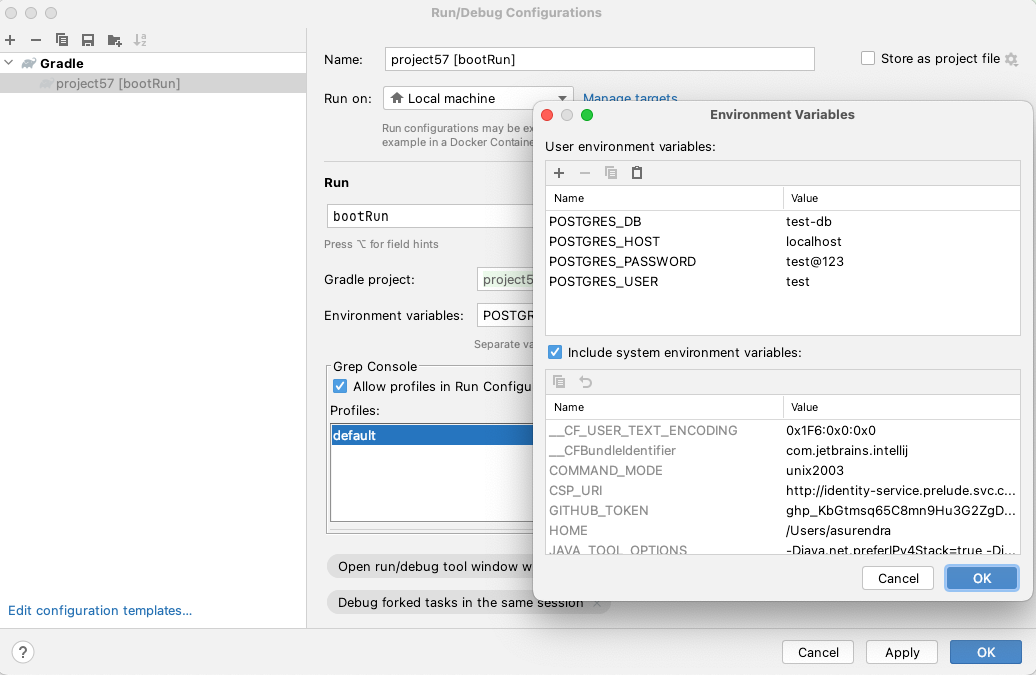

Setup

Project 57

Points of Failure in a System

https://gitorko.github.io/points-of-failure/

Version

Check version

    $ java --version
    openjdk 17.0.3 2022-04-19 LTS

Postgres DB

    docker run -p 5432:5432 --name pg-container -e POSTGRES_PASSWORD=password -d postgres:9.6.10
    docker ps
    docker exec -it pg-container psql -U postgres -W postgres
    CREATE USER test WITH PASSWORD 'test@123';
    CREATE DATABASE "test-db" WITH OWNER "test" ENCODING UTF8 TEMPLATE template0;
    grant all PRIVILEGES ON DATABASE "test-db" to test;

    docker stop pg-container
    docker start pg-container

Dev

To run the backend in dev mode.

    ./gradlew clean build
    ./gradlew bootRun

Kubernetes

    docker stop pg-container

    ./gradlew clean build
    docker build -f k8s/Dockerfile --force-rm -t project57:1.0.0 .
    kubectl apply -f k8s/Postgres.yaml
    kubectl apply -f k8s/Deployment.yaml
    kubectl get pods -w

    kubectl logs -f service/project57-service

    kubectl delete -f k8s/Postgres.yaml
    kubectl delete -f k8s/Deployment.yaml